1.1. WHAT IS A COMPUTER?

A Brief and Rough Description

Hardware

In the old days a Computer was a person who was given the task of performing a list of calculations using some kind of calculating aid, often a mechanical calculator or a slide rule. In the years after World War II, Electronic Computers made their appearance: machines that did the job of human computers much faster. There were several early computer designs, but the one that prevailed was the Electronic Digital Computer, which solved problems by manipulating numerical digits. Pretty soon the job of human computers disappeared, and eventually the word Computer became a synonym for Electronic Digital Computer.

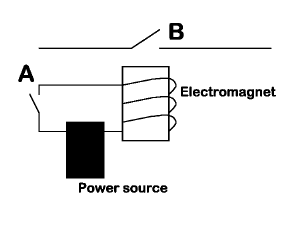

The key ingredient in the circuitry of an electronic digital computer is an element consisting of a switch in a circuit that can be turned on and off by the current in another circuit. In the old days (around World War II) such elements were electromechanical relays (Figure 1.1.1) or vacuum tubes, and computers tended to be quite bulky. One of the early IBM computers, the Selective Sequence Electronic Calculator (SSEC), built in 1948, consisted of 20,000 relays and 12,500 vacuum tubes and occupied 25 by 40 feet of floor space. This machine was used to calculate the tables of moon positions that were used 20 years later in the Apollo mission [A].

Figure 1.1.1: Diagram of a switching circuit based on an electromagnet. When switch A closes, the electromagnet is activated and causes switch B to close. We could also arrange for switch B to open when switch A is closed. Such a setup is called an inverter.

The word bug for a computer malfunction has its origins in the days of relays. One day (September 9, 1945) a moth was stuck between the contacts of a relay and Grace Hopper (1906-1992), one of the computer pioneers, logged the incident as a bug.

In the late 1950s transistors were introduced and computer size went down, while memory and speed went up. Then came integrated circuits, devices that could have millions of transistors on a small piece of silicon, and computers have been getting more powerful and cheaper every year. The Computer Revolution was made possible by technology that could make switching circuits that were very small, very fast, and very cheap.

Switching circuits can perform logical and numerical operations provided that all the elements of the computation are binary, that is, each has only one of two possible values, 0 or 1. Usually 0 corresponds to an open switch and 1 to a closed switch. The word bit is used to describe a single switch that can be open (0) or closed (1). The word byte refers to a set of eight bits. A kilobyte is 1024 bytes. Normally the prefix kilo denotes a multiple of 1000, but computer engineers prefer to use numbers that are powers of two because binary is the basic numerical system of the machines: 1024 is 2 raised to the 10th power (ten 2s multiplied together). A machine with one megabyte of memory contains 1024 x 1024 x 8 switches, over eight million of them.
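The arithmetic in the preceding paragraph can be checked with a few lines of code. This is a minimal sketch in Python; the names BITS_PER_BYTE, KILOBYTE, MEGABYTE and switches_in are chosen here for illustration and do not come from the text.

```python
# Memory sizes as powers of two, following the definitions in the text.
BITS_PER_BYTE = 8
KILOBYTE = 2 ** 10               # 1024 bytes
MEGABYTE = KILOBYTE * KILOBYTE   # 1024 x 1024 bytes


def switches_in(num_bytes):
    """Return how many one-bit switches are needed to hold num_bytes bytes."""
    return num_bytes * BITS_PER_BYTE


print(KILOBYTE)                # 1024
print(switches_in(MEGABYTE))   # 8388608, i.e. over eight million
```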

It turns out that almost any problem that can be expressed by mathematical and logical operations can be solved (at least approximately) by a device using only switching circuits. This insight was part of mathematical developments in the 1930s, and a major contributor to the new science was the British mathematician Alan Turing (1912-1954). Turing went on to supervise the building of one of the first computers in order to break the German communication codes during World War II.

The collection of switching circuits is part of the computer hardware. It is possible to wire such circuits together to perform particular operations. If you are curious how this can be done, you will find some examples in Section 1.X. The operations can be simple, such as performing an addition, but there is no limit on their complexity. Such circuits are usually called special purpose hardware. However, most computers rely on another property of switching circuits: the connectivity of their basic elements can be modified by information stored in some of those elements. That is what makes computers programmable.
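To give a feel for how switches wired together can perform an addition, here is a minimal sketch in Python that simulates the circuits in software. Each function stands for a small switching circuit whose inputs and outputs are 0 or 1. The function names (NOT, AND, OR, XOR, full_adder, add_bits) and the particular wiring are illustrative assumptions, not the design of any specific machine.

```python
def NOT(a):
    """Inverter: output is 1 when the input is 0, and 0 when it is 1."""
    return 1 - a


def AND(a, b):
    """Two switches in series: current flows only if both are closed."""
    return a & b


def OR(a, b):
    """Two switches in parallel: current flows if either one is closed."""
    return a | b


def XOR(a, b):
    """Exactly one input is 1; built only from the three circuits above."""
    return AND(OR(a, b), NOT(AND(a, b)))


def full_adder(a, b, carry_in):
    """Add three bits; return (sum bit, carry bit)."""
    partial = XOR(a, b)
    total = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return total, carry_out


def add_bits(x_bits, y_bits):
    """Add two equal-length lists of bits, least significant bit first."""
    result = []
    carry = 0
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        result.append(s)
    result.append(carry)  # the final carry becomes the top bit
    return result


# 3 + 5 = 8. Bits are listed least significant first:
# 3 is [1, 1, 0], 5 is [1, 0, 1], and 8 is [0, 0, 0, 1].
print(add_bits([1, 1, 0], [1, 0, 1]))  # [0, 0, 0, 1]
```

Chaining one full adder per bit in this way is only one of several possible arrangements; it is used here because it is the easiest to follow.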

Software

Most modern computers are designed according to principles developed by the Hungarian-American mathematician John von Neumann (1903-1957), who wrote a paper on programmable machines around 1945. Von Neumann had a computer built at the Institute for Advanced Study in Princeton. The engineer for the project was Julian Bigelow (1913-2003) and the technician was Leon Harmon (1922-1982).

A program consists of sets of instructions and data for the computer to process. The term software is used to describe the collection of programs run on a computer; it was coined as a contrast to hardware. If you are curious about how the software interacts with the hardware, you will find some examples in Section 1.X. Most modern computers use special purpose hardware for basic operations, such as addition and multiplication of numbers, and rely on software for everything else.

In the early days programming was done by providing the binary codes of the instructions. Later programming was done in Assembly Code, using letter/number combinations that corresponded directly to the binary code. Eventually several programming languages were developed where the code has a human-friendly form. Another program, called a compiler, had to be used to translate the code into machine language, strings of 1's and 0's that controlled the switching circuits of the machine.
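The correspondence between Assembly Code and binary code can be illustrated with a toy translator. The sketch below is written in Python and assumes an invented 8-bit instruction format (a 4-bit operation code followed by a 4-bit operand). The mnemonics LOAD, ADD, STORE and HALT and their binary codes are hypothetical and do not belong to any real machine.

```python
# Hypothetical instruction set, invented for illustration only:
# each instruction is 8 bits, a 4-bit operation code plus a 4-bit operand.
OPCODES = {
    "LOAD": 0b0001,
    "ADD": 0b0010,
    "STORE": 0b0011,
    "HALT": 0b1111,
}


def assemble_line(line):
    """Translate one line such as 'ADD 5' into an 8-character bit string."""
    parts = line.split()
    opcode = OPCODES[parts[0]]
    operand = int(parts[1]) if len(parts) > 1 else 0
    if not 0 <= operand <= 15:
        raise ValueError("operand must fit in 4 bits")
    return format(opcode, "04b") + format(operand, "04b")


program = ["LOAD 2", "ADD 5", "STORE 7", "HALT"]
for line in program:
    print(line.ljust(8), assemble_line(line))
# LOAD 2   00010010
# ADD 5    00100101
# STORE 7  00110111
# HALT     11110000
```

A compiler does a much harder job than this one-to-one translation, since a single statement in a high-level language may turn into many machine instructions.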

The first two such languages were FORTRAN (for scientific applications) and COBOL (for commercial applications), both developed in the late 1950s. FORTRAN was developed at IBM by a team led by John W. Backus (1924-2007), and COBOL was invented by Grace Hopper, who also wrote the compiler for it. Usually, the machine code generated by the compilers of programs written in such languages was not as efficient as hand-coded instructions, so Assembly Code continued to be used for applications where speed was important.

Eventually, FORTRAN and COBOL were superseded by other languages, such as Algol, C, C++, and Java, that made programs even easier to write. Here is, for example, a piece of code to compute the amount of the sales tax in a retail store checkout computer:

tax_rate = 0.08;

The * symbol stands for multiplication. (This line of code could be from any of several languages such as C, C++, Java, etc.)
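For readers who want to run such a computation, here is a minimal sketch in Python rather than in C, C++, or Java. Only the tax_rate value of 0.08 comes from the text; the function name sales_tax, the variable price, and the sample price are assumptions made for illustration.

```python
def sales_tax(price, tax_rate=0.08):
    """Return the sales tax on price, rounded to whole cents."""
    return round(tax_rate * price, 2)   # * stands for multiplication


price = 25.00                 # hypothetical item price
tax = sales_tax(price)
print(tax)                    # 2.0
print(price + tax)            # 27.0
```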

Compilers have also improved so that they can generate efficient machine code, and there is no longer a need for writing programs in Assembly Code. However, the old languages cannot be forgotten because there are still programs written in them that are running. This became evident when programs had to be modified because of the Y2K issue, to make sure that computers would shift from 1999 to 2000 correctly. Many of the business programs that had to be checked were old programs written in COBOL.
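The text does not spell out what went wrong in those programs. The sketch below assumes the commonly described cause, namely that many old programs stored only the last two digits of the year. It is written in Python for illustration, not in COBOL, and the function names are hypothetical.

```python
def years_elapsed_two_digit(start_yy, end_yy):
    """Year arithmetic as done by a program that keeps only two digits."""
    return end_yy - start_yy


def years_elapsed_four_digit(start_yyyy, end_yyyy):
    """The same arithmetic with full four-digit years."""
    return end_yyyy - start_yyyy


# An account opened in 1995 and checked in 2000:
print(years_elapsed_two_digit(95, 0))         # -95, clearly wrong
print(years_elapsed_four_digit(1995, 2000))   # 5
```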

Usually there are several layers of software running on a computer. The Operating System, for example Linux or Windows, interacts directly with the hardware and on top of that there are Application Programs that interact with the operating system.

Computers versus Humans

The key point to remember is that the machine itself has no intelligence - the intelligence comes from the software, programs that are written by humans. (Even when special purpose hardware is used, it must also be designed by humans.) What is often referred to as "machine (or artificial) intelligence" are programs that are supposed to replicate human thinking and cognition. It would be more accurate to say that we are discussing "Computer Programs Replicating Human Thinking and Cognition versus Real Humans" rather than "Computers versus Humans" but we opt for brevity.

Computers and Text

In addition to solving mathematical problems, computers can be used to manipulate text because each letter or other symbol can be given a numerical code, so that a piece of text is reduced to a string of numbers. Most people experience computers through text processing rather than number crunching. From typing letters, to browsing the web, to reading a book on a Kindle, all these operations use the text processing capabilities of computers.

When you type on your personal computer, each keystroke generates a code corresponding to the letter on the key. You can actually change the code by specifying a different language. For example, in Microsoft Word the key that produces the code for j in English will produce the code for ξ in Greek. There are several systems of encoding and it is beyond our scope to discuss them here. For the sake of illustration, the codes for a few letters and symbols in the most commonly used system [1] are given below. The numbers are in the 0-255 range so that each code occupies one byte. (For writing systems such as Chinese, the codes are two bytes long.)

| Key | Numerical Code (Decimal) | Numerical Code (Binary) |
|---|---|---|
| space | 32 | 00 100 000 |
| A | 65 | 01 000 001 |
| a | 97 | 01 100 001 |
| 1 | 49 | 00 110 001 |

The code is the same regardless of the size or the style (bold, italic, etc.) of the letter. The information about the exact appearance of a letter is stored elsewhere in a word processing program or in a web page.
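The codes in the table above can be reproduced with a short program. This is a minimal sketch in Python, using the built-in functions ord and chr, which work with Unicode code points; for the characters shown here these agree with the codes in the table. The helper name code_row and the sample text are chosen for illustration.

```python
def code_row(ch):
    """Return the decimal code of a character and its 8-bit binary form."""
    code = ord(ch)
    return code, format(code, "08b")


for key in [" ", "A", "a", "1"]:
    decimal, binary = code_row(key)
    print(repr(key), decimal, binary)
# ' ' 32 00100000
# 'A' 65 01000001
# 'a' 97 01100001
# '1' 49 00110001

# A piece of text reduced to a string of numbers, and back again.
text = "A1 a"
codes = [ord(ch) for ch in text]
print(codes)                             # [65, 49, 32, 97]
print("".join(chr(c) for c in codes))    # A1 a
```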

Notes

[A] http://www.computerhistory.org/timeline/?year=1948

[B] http://www.computerhistory.org/timeline/?year=1945

[1] This is the American Standard Code for Information Interchange, or ASCII for short.